Morse code needs a special symbol (a pause) to signal the end of each code, which makes transmitting data in Morse less efficient. Compare that to Huffman codes, where no code is the prefix of another: as soon as you recognize the encoding for a symbol in the input, you know that it is the transmitted symbol, because it is guaranteed not to be the prefix of some other symbol's code.

You can use a fixed encoding scheme based on the likely frequencies of characters (such as those of English text), or you can use adaptive Huffman encoding, which changes the encoding scheme as characters are processed and frequencies are adjusted. While the former may be okay for input that has a high probability of matching the assumed frequencies, the latter can adapt to any input. The table itself is often Huffman encoded. Arithmetic coding is used in the bi-level image compression standard JBIG.

Huffman coding does not minimize the maximum codeword length. (By the way, it is quite easy to minimize the maximum codeword length: if you have n symbols, you can just use k = ceil(log2(n)) bits and arbitrarily assign each symbol to one distinct combination of these k bits.)

It's the dual effect of having the most frequent characters using the shortest bit sequences that gives you the savings. For a concrete example, let's say you have a piece of text that consists of 1024 e characters and 1024 of all other characters combined. With 8 bits per character, that's a full 2048 bytes in uncompressed form. Now let's say we represent e as a single 1-bit and every other letter as a 0-bit followed by its original 8 bits (a very primitive form of Huffman). You can see that half the characters have been expanded from 8 bits to 9, giving 9216 bits, or 1152 bytes. However, the e characters have been reduced from 8 bits to 1, meaning they take up 1024 bits, or 128 bytes. The total is therefore 1152 + 128, or 1280 bytes, so the compressed text is 62.5% of its original size.
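The arithmetic in the 1024-e example can be checked with a few lines of Python. This is only a sketch of the toy scheme described in the text (e becomes a single 1-bit, every other character becomes a 0-bit plus its original 8 bits); the function name `primitive_code_size` is made up for illustration.

```python
def primitive_code_size(num_e, num_other):
    """Return (uncompressed_bytes, compressed_bytes) for the toy scheme.

    Toy scheme from the text: 'e' is sent as a single 1-bit, and any
    other character as a 0-bit followed by its original 8 bits.
    """
    uncompressed_bits = (num_e + num_other) * 8
    compressed_bits = num_e * 1 + num_other * 9
    # Round up to whole bytes.
    return (uncompressed_bits + 7) // 8, (compressed_bits + 7) // 8


original, compressed = primitive_code_size(1024, 1024)
print(original, compressed, compressed / original)  # 2048 1280 0.625
```

Changing the two counts shows how quickly the saving disappears once e stops dominating the input: with 100 e characters and 1948 others, the "compressed" form is larger than the original.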

The symbols most likely to be in your data use the fewest bits in the encoding, making the coding efficient. The prefix code part is useful because it means that no code is the prefix of another. In Morse code, for instance, A = dot dash and J = dot dash dash dash, so how do you know where to break when reading the code?
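To make the prefix property concrete, here is a small Python sketch. The codebook `toy` is a hypothetical prefix-free code invented for this example, and the helper names `is_prefix_free` and `decode_prefix` are assumptions, not part of any library. The check flags the Morse pair A/J as ambiguous, while the toy code can be decoded bit by bit with no separator.

```python
def is_prefix_free(codebook):
    """True if no code in {symbol: code} is a prefix of another code."""
    codes = list(codebook.values())
    return not any(
        i != j and b.startswith(a)
        for i, a in enumerate(codes)
        for j, b in enumerate(codes)
    )


def decode_prefix(bits, codebook):
    """Decode a bit string using a prefix-free codebook {symbol: code}."""
    reverse = {code: sym for sym, code in codebook.items()}
    out, current = [], ""
    for bit in bits:
        current += bit
        if current in reverse:
            # A complete code: no longer code can start with it.
            out.append(reverse[current])
            current = ""
    if current:
        raise ValueError("trailing bits do not form a complete code")
    return "".join(out)


morse = {"A": ".-", "J": ".---"}                     # A is a prefix of J
toy = {"e": "0", "t": "10", "a": "110", "j": "111"}  # hypothetical code

print(is_prefix_free(morse))             # False
print(is_prefix_free(toy))               # True
print(decode_prefix("0101100111", toy))  # etaej
```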

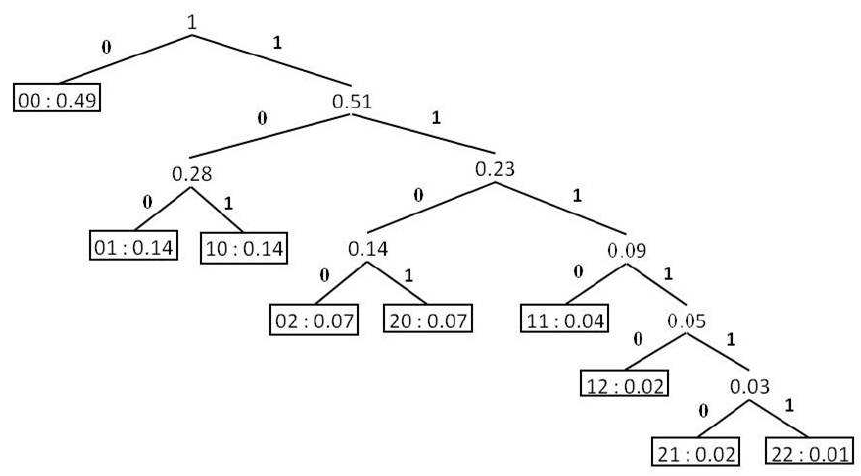

Explosive growth of data in the digital world leads to the requirement for efficient techniques to store and transmit data. Due to limited resources, data compression (DC) techniques are proposed to minimize the size of data being stored or communicated. As DC concepts result in effective utilization of available storage and communication bandwidth, numerous approaches have been developed in several aspects. In order to analyze how DC techniques and their applications have evolved, a detailed survey of many existing DC techniques is carried out to address the current requirements in terms of data quality, coding schemes, type of data and applications. A comparative analysis is also performed to identify the contribution of the reviewed techniques in terms of their characteristics, underlying concepts, experimental factors and limitations. Finally, this paper gives insight into various open issues and research directions to explore the promising areas for future developments.

If you assign fewer bits, or shorter code words, to the most frequently used symbols, you will save a lot of storage space. Suppose you want to assign 26 unique codes to the English alphabet and store an English novel (letters only) in terms of these codes: you will require less memory if you assign short codes to the most frequently occurring characters. You might have observed that postal codes and STD codes for important cities are usually shorter (as they are used very often). This is a very fundamental concept in information theory.

Huffman codes are space efficient given some corpus. Given some set of documents, for instance, encoding those documents as Huffman codes is the most space-efficient way of encoding them symbol by symbol with a prefix code, thus saving space. This, however, only applies to that set of documents, as the codes you end up with depend on the probability of the tokens/symbols in the original set of documents. The statistics are important because the symbols with the highest probability (frequency) are given the shortest codes.

A greedy approach to construct a Huffman tree for n characters is as follows: start by combining the two least-weight nodes into a tree, which is assigned the sum of the two leaf node weights as the weight for its root node. Then repeat, treating each new tree as a single node, until only one tree remains. For example, consider a binary tree where E and T have high weights (as they occur very often). To get the Huffman code for any character, start from the node corresponding to the character and backtrack until you reach the root node.
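The greedy construction of a Huffman tree (repeatedly merging the two lowest-weight nodes) can be sketched in Python as follows. This is a minimal illustration rather than a reference implementation: the function name `huffman_codes`, the tie-breaking counter, and the choice of "0" for the left branch and "1" for the right are assumptions made here. Codes are read from the root down to each leaf, which yields the same bits as backtracking from the leaf to the root and then reversing them.

```python
import heapq
from collections import Counter
from itertools import count


def huffman_codes(text):
    """Build a {symbol: bit string} Huffman code for the characters in text."""
    freq = Counter(text)
    if not freq:
        return {}
    tiebreak = count()  # keeps heap entries comparable when weights are equal
    # Heap entries are (weight, tiebreak, node); a node is either a
    # single character (leaf) or a (left, right) tuple (internal node).
    heap = [(w, next(tiebreak), sym) for sym, w in freq.items()]
    heapq.heapify(heap)
    if len(heap) == 1:
        # Only one distinct symbol: give it a one-bit code.
        return {heap[0][2]: "0"}
    while len(heap) > 1:
        # Greedy step: merge the two least-weight nodes.
        w1, _, left = heapq.heappop(heap)
        w2, _, right = heapq.heappop(heap)
        heapq.heappush(heap, (w1 + w2, next(tiebreak), (left, right)))
    root = heap[0][2]

    codes = {}

    def walk(node, prefix):
        if isinstance(node, tuple):
            walk(node[0], prefix + "0")
            walk(node[1], prefix + "1")
        else:
            codes[node] = prefix

    walk(root, "")
    return codes


sample = "eeeeeeeettttaab"  # e x8, t x4, a x2, b x1
codes = huffman_codes(sample)
total_bits = sum(len(codes[ch]) for ch in sample)
print({sym: len(code) for sym, code in codes.items()}, total_bits)
# code lengths: e=1, t=2, a=3, b=3; 25 bits in total
```

The most frequent symbol ends up nearest the root and so gets the shortest code. A fixed 2-bit code for the same four symbols would need 30 bits for this sample.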
